Author: Juan Vasquez

Date: April 2, 2026

AI adoption rarely begins with a steering committee.

It starts with a single person trying to clear a backlog. A senior associate drops a contract into a chatbot to generate a faster issue list. A compliance manager asks for a quick summary of a new regulation. Someone in finance uses an “AI assist” button that appeared overnight in a tool they have used for years. A project manager asks for meeting notes to be rewritten as action items, and the output is good enough that nobody asks what system produced it. Soon, prompts and outputs are traveling through email threads and shared drives. Draft language with a certain “smoothness” shows up in memos. A vendor demo quietly rebrands “search” as “AI answers,” and that new feature begins influencing decisions.

The pattern is familiar because it is ordinary: uneven, informal, and frequently invisible at the leadership level until it is already embedded in workflows. The data supports that reality. In 2024, Microsoft and LinkedIn reported that 75% of global knowledge workers were already using AI at work, and that many employees were bringing their own AI tools into the workplace rather than waiting for formal rollout.1

This is not a crisis narrative. It is an adoption narrative. But unmanaged or uneven adoption creates real risks in professional settings. Generative AI systems can produce “confidently stated but erroneous or false content,” a phenomenon NIST calls “confabulation”2 and notes is also referred to as “hallucinations” or “fabrications.”3 Confabulation matters because it can look like competence. It can also be paired with overreach, meaning a tool is used beyond the context where its limitations are understood, or beyond what policy, contract, or professional obligations allow.

Just as importantly, the risk surface is not limited to accuracy. NIST highlights that generative AI raises multiple privacy risks, including risks connected to the use of personal data in training and the handling of content in operation.4 Meanwhile, workforce behavior creates exposure when AI use is untracked or unsupervised. For example, late 2024 research publicized by CybSafe found that 38% of employees admitted sharing sensitive information with AI tools without their employer’s knowledge.5

In legal and regulated environments, those risks map directly onto duties and consequences: confidentiality and privilege, data protection, consumer protection, recordkeeping, procurement integrity, supervisory obligations, and candor to tribunals. Bar guidance has been explicit that lawyers may use generative AI, but they remain responsible for the work product and must protect client confidentiality and understand relevant tool policies such as data retention and sharing.6

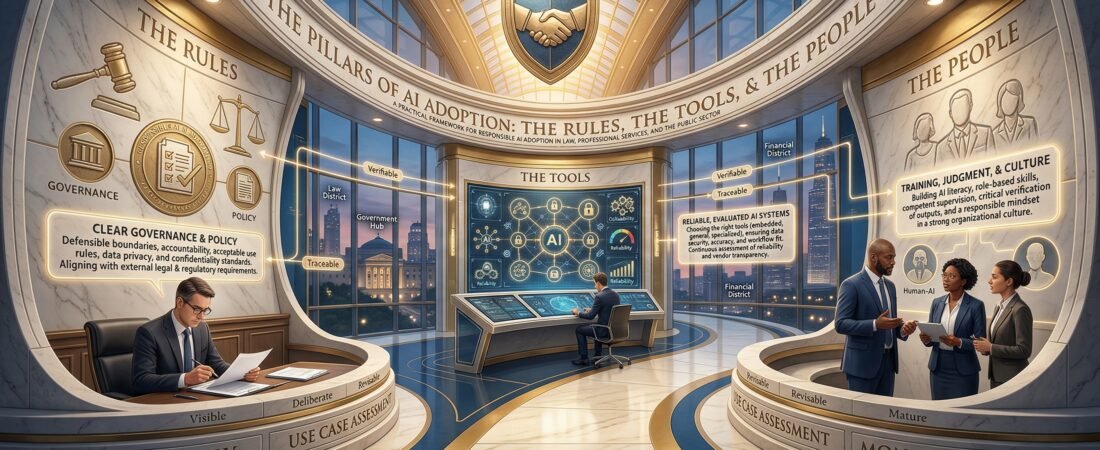

This is where many organizations get stuck. Some treat AI adoption as a technology procurement problem: select a tool, turn it on, and measure productivity. Others treat it as a governance exercise: draft a policy, publish it, and assume adoption is now “managed.” Both approaches fail because they describe only part of the system. 3ITAL’s framework rests on three pillars of AI adoption (the Rules, the Tools, and the People) and is built for this reality. Our thesis is simple: responsible and sustainable AI adoption requires aligned strength across all three pillars, because weakness in any one pillar will undermine the others.

Why unmanaged AI adoption creates organizational risk

Unmanaged AI adoption is not just “shadow IT” with a new interface. It is a distinctive risk pattern driven by three dynamics: accelerated diffusion, uneven capability, and shifting tool behavior.

First, diffusion is fast because the perceived barrier is low. AI features are increasingly embedded into mainstream platforms, which means adoption can occur through normal updates, not formal procurement. Google announced the general availability of Gemini in the side panel of Docs, Sheets, Slides, and Drive, explicitly positioning it as an in-workflow assistant that can summarize, analyze, and generate content using insights from emails and documents.7 Microsoft similarly markets Copilot as built into familiar applications such as Word, Excel, Outlook, and PowerPoint.8 In practice, this means “AI use” may not look like bringing in a new vendor. It often looks like a feature toggle.

Second, capability is uneven. Some users have the judgment to treat generative AI as a drafting assistant that requires verification. Others treat it like an expert. The risk is amplified by how generative AI presents outputs: NIST’s definition of confabulation emphasizes confident presentation of false content, which can mislead users into acting on incorrect information.9 Bar guidance has flagged exactly this point in legal practice: lawyers must provide competent and accurate services and remain responsible for their professional judgment, even when using generative AI tools.10

Third, tool behavior shifts over time, which undermines static assumptions. Vendors add new models, new connectors, and new “assist” features; data handling terms can vary by product tier; output quality can change as models update. Even the New York City Bar’s 2024 opinion underscored that a summary of available tools would “likely soon be outdated because of the rapid evolution” of generative AI, and it explicitly noted that general-purpose platforms, legal research platforms, and even data management vendors offer generative AI capabilities.11

When these dynamics are not managed, familiar enterprise risks appear in new forms:

Confabulation and overreliance become operational risk: inaccurate summaries, invented citations, and plausible but wrong reasoning. NIST warns that confabulation can drive misinformation at scale, especially when users treat confident output as verified truth.12 In legal practice, the consequences have been concrete. In Mata v. Avianca, the court described attorneys submitting non-existent judicial opinions with fake citations generated by ChatGPT, and emphasized that existing rules impose a gatekeeping role on attorneys to ensure accuracy.13

Tool overreach and tool misalignment become governance risk: a tool used for a low-risk purpose gets repurposed into high-risk decision support without a corresponding change in controls. Many organizations discover, too late, that “summarize this” and “draft that” are not neutral acts when the input includes confidential, privileged, regulated, or security-sensitive information. NIST’s generative AI profile devotes an entire section to data privacy risks, noting that the training, development, and deployment of generative AI systems can raise privacy concerns.14

Privacy and confidentiality failures become accountability risk: the core issue is not only malicious misuse, but normal work behavior. When employees use unapproved tools, the organization often cannot answer basic questions: What data was entered? Was it retained? Who can access it? Can the organization honor deletion requests, litigation holds, or public records obligations? This is not hypothetical in workforce data. CybSafe’s reported findings on sensitive information shared without employer knowledge illustrate how easily confidentiality boundaries can be crossed in practice.15

Vendor opacity becomes contractual and compliance risk: procurement teams, legal teams, and compliance teams increasingly face model terms, data processing terms, and subprocessor chains that were never contemplated when the “AI feature” appeared. The Federal Trade Commission has repeatedly signaled that AI is not exempt from existing law and that privacy and confidentiality representations matter.16

Finally, inconsistent or undocumented use becomes an audit and defensibility problem. In government contexts, the policy response has been explicit. OMB’s Memorandum M-24-10 establishes governance requirements and directs agencies to inventory AI use cases, report risks for certain categories, and apply minimum risk management practices for AI that impacts rights and safety.17 Even outside government, the message is transferable: you cannot govern what you cannot see.

The 3ITAL Pillars of AI Adoption

The 3ITAL Pillars framework treats AI adoption as an organizational system, not a software deployment.

The pillars are:

- The Rules: governance, policy, and accountability mechanisms that work in real workflows, not just on paper.

- The Tools: the actual AI systems people use, including embedded features, and the operational and contractual realities around them.

- The People: training, readiness, judgment, supervision, and culture, meaning how the workforce actually behaves when AI makes work faster.

The key insight is interdependence. NIST’s generative AI profile explicitly connects risk management to governance, mapping, measurement, and management across the AI lifecycle, and it includes the need to define acceptable use policies for generative AI interfaces and configurations.18 That kind of guidance implicitly assumes all three pillars: policies (rules), evaluated systems (tools), and human configuration and oversight (people).19

The same interdependence shows up in government accountability and standards work. GAO’s AI accountability framework organizes responsible AI use around governance, data, performance, and monitoring, all of which require both institutional controls and human practice.20 ISO/IEC 42001 similarly describes an AI management system standard built around establishing policies and objectives and maintaining continual improvement, which is a governance-and-operations lens rather than a single-tool lens.21

At a practical level, each pillar answers a different organizational question:

- The Rules answer: What is allowed, for whom, for what purpose, under what constraints, with what accountability?

- The Tools answer: What systems are we actually using, what do they do with data, how reliable are they for defined uses, and how do they fit our workflows?

- The People answer: Do our teams understand the limits, the rules, and the consequences, and do they have the habits to use AI responsibly under time pressure?

The Rules

Rules are the difference between “we have AI” and “we can defend how we use AI.”

In professional environments, “rules” should be anchored in concrete policy instruments and operational governance, not abstract principles. At minimum, most organizations need an AI usage policy and a data privacy policy that explicitly addresses AI-enabled workflows, including embedded AI features.

NIST’s generative AI profile provides a practical cue: it calls for defining acceptable use policies for generative AI interfaces, modalities, and human-AI configurations, including criteria for what kinds of queries systems should refuse.22 That is not “governance theater.” It is a recognition that generative AI changes what people can ask, what they can extract, and how quickly they can disseminate content.

Rules also need to align with external obligations. In the legal profession, this is not optional. ABA Model Rule 1.1’s Comment [8] states that lawyers should keep abreast of changes in law and practice, including the benefits and risks associated with relevant technology.23 ABA Model Rule 1.6 establishes the confidentiality baseline, and ABA Formal Opinion 512 emphasizes that lawyers and law firms using generative AI must consider duties including competence, confidentiality, communication, and fees.24 State and local guidance reinforces that message. The Florida Bar’s Opinion 24-1 instructs lawyers to protect confidentiality by researching a program’s policies on data retention, sharing, and “self-learning,” and it stresses that lawyers remain responsible for their work product and judgment.25

In government and regulated sectors, the same concept appears in different clothing: inventories, governance bodies, and minimum practices. OMB’s M-24-10 requires agencies to designate a Chief AI Officer, convene governance bodies, inventory AI use cases, and apply minimum risk management practices for safety- and rights-impacting AI.26 Those requirements are not about paperwork; they are about enforceable accountability in operational systems.

A well-built “Rules” pillar typically specifies: permitted vs prohibited uses, required human review and verification steps, data handling boundaries (including “no sensitive data in unapproved tools”), documentation expectations, escalation pathways when something goes wrong, and supervisory responsibility. These are the rules that survive contact with real work.
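
The elements listed above can also be expressed as a machine-checkable policy. The following is a minimal sketch, not a prescribed implementation: the classification labels, tool names, use-case names, and the `evaluate` function are all hypothetical and chosen only to illustrate how permitted uses, data handling boundaries, and review requirements might be encoded.

```python
from dataclasses import dataclass

# Hypothetical data classifications, for illustration only.
SENSITIVE_CLASSES = {"confidential", "privileged", "regulated"}


@dataclass(frozen=True)
class UsageRequest:
    tool: str             # tool the employee wants to use
    use_case: str         # e.g. "summarize_document"
    data_class: str       # classification of the input data
    human_reviewed: bool  # whether a person will verify the output


@dataclass(frozen=True)
class Policy:
    approved_tools: frozenset
    prohibited_use_cases: frozenset
    review_required_use_cases: frozenset


def evaluate(request: UsageRequest, policy: Policy) -> tuple:
    """Return (allowed, reason) for a proposed AI use under the policy."""
    if request.use_case in policy.prohibited_use_cases:
        return False, "prohibited use case: escalate to the policy owner"
    # Data handling boundary: sensitive data may only go into approved tools.
    if (request.data_class in SENSITIVE_CLASSES
            and request.tool not in policy.approved_tools):
        return False, "no sensitive data in unapproved tools"
    # Verification boundary: some use cases always need human review.
    if (request.use_case in policy.review_required_use_cases
            and not request.human_reviewed):
        return False, "human review and verification required"
    return True, "permitted"
```

A check like this does not replace supervision. Its value is that the rule and the reason for a refusal are written down in one place, which supports documentation and escalation.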

The Tools

Tools determine the real boundaries of AI adoption, even when policies say otherwise.

Organizations often talk about AI tools as interchangeable, as if “a chatbot is a chatbot.” In practice, tools differ across reliability, data handling, workflow fit, and vendor transparency. Those differences are not cosmetic. They determine whether rules can be followed and whether people can make good decisions under time constraints.

Start with reliability. NIST’s generative AI profile frames confabulation as a core risk, defining it as the production of confidently stated but false content that can mislead or deceive users.27 It also explicitly recommends actions such as reviewing and verifying sources and citations in generative AI outputs and avoiding extrapolating system capabilities from narrow or anecdotal assessments.28 In other words: if an organization does not understand the limits of its tools, it is effectively walking through a minefield with a map drawn after the fact.

Now consider data handling. NIST’s generative AI profile describes privacy risks linked to the scale of training data and the possibility that personal data may be involved.29 At the same time, vendors differentiate product tiers with different privacy postures. For example, Google’s Workspace AI page claims that organizational data “is not used to train Gemini models or for ads targeting” and emphasizes enterprise controls such as DLP.30 Whether and how an organization can rely on such statements is a governance and contracting question, but the core point is operational: tool selection directly affects data risk.

Workflow integration is another tool risk. When generative AI is placed inside email, document, and collaboration suites, the convenience is real and so is the exposure. Google’s announcement about Gemini in the side panel highlights that it can generate and summarize content using existing Workspace information without switching applications.31 Microsoft markets Copilot as embedded across common productivity apps.32 Embedded AI changes the default behavior of work. It increases the volume of “AI touched” content, and it can blur the line between human authored and AI assisted text in records, filings, and formal communications.

Finally, tools exist beyond what IT approves. IBM defines “shadow AI” as the unsanctioned use of AI tools without formal approval or oversight.33 Workforce behavior and tool accessibility create a simple reality: if approved tools are poor, slow, or mismatched to real needs, people will route around them.

For the Tools pillar to be strong, organizations need an inventory of what is actually being used, a risk assessment by use case, contractual and privacy diligence, and a method to manage vendor feature changes over time. In high-trust professions, a “good enough” tool is rarely good enough if the consequences of error, leakage, or misuse are severe.
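
As one illustration of the inventory and diligence steps described above, the sketch below records each tool with its use cases and flags open diligence items. The record fields, risk labels, and the `diligence_gaps` helper are assumptions made for this example, not part of any cited framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolRecord:
    name: str
    approved: bool
    use_cases: dict  # hypothetical mapping: use case -> "low" | "medium" | "high"
    # None means the vendor's data handling terms have not been confirmed yet.
    trains_on_inputs: Optional[bool] = None


def diligence_gaps(inventory: list) -> list:
    """List open diligence items for every tool in the inventory."""
    gaps = []
    for tool in inventory:
        high_risk = sorted(u for u, tier in tool.use_cases.items() if tier == "high")
        if not tool.approved:
            gaps.append(f"{tool.name}: in use but not approved")
        if tool.trains_on_inputs is None:
            gaps.append(f"{tool.name}: data handling terms unconfirmed")
        if high_risk and tool.trains_on_inputs:
            gaps.append(
                f"{tool.name}: high-risk use ({', '.join(high_risk)}) "
                "on a tool that trains on inputs"
            )
    return gaps
```

The design choice worth noting is the explicit "unconfirmed" state: an unknown data handling posture is treated as a gap to close, not as a pass.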

The People

People are not a “soft” pillar. They are the operational control layer.

Even the best policy can be defeated by normal behavior under deadline: copying sensitive text into an unapproved tool for speed, skipping verification because output “looks right,” or using AI beyond one’s competence because it reduces friction.

Professional responsibility guidance treats this as a competence and supervision issue. ABA Model Rule 1.1’s Comment [8] explicitly links competence to understanding the benefits and risks of relevant technology.34 ABA Formal Opinion 512 and state guidance similarly emphasize that lawyers must remain responsible for accuracy and confidentiality, and they must consider how tool use affects duties to clients.35

The public sector has also begun treating workforce readiness as a governance requirement. The EU AI Act discusses “AI literacy” as a way to obtain benefits while protecting rights and safety, and it frames literacy as equipping providers, deployers, and affected persons with necessary insights to ensure compliance and correct enforcement.36 This is a useful signal for non-EU organizations as well: regulators increasingly expect not only technical controls, but informed human practice.

In the U.S. federal context, OMB’s M-24-10 requires each agency to designate a Chief AI Officer and specifies that these CAIOs must have the “skills, knowledge, training, and expertise” to carry out required responsibilities.37 The presence of training language in governance policy is telling. The assumption is that AI governance is not self-executing.

A strong People pillar includes role-based training (not generic “AI 101”), supervision requirements, documented review expectations, and culture signals that reinforce verification, escalation, and transparency. It also includes clear expectations about what AI can and cannot replace. Florida’s Opinion 24-1 captures the baseline reminder: lawyers remain responsible for their work product and professional judgment, and should verify that AI use aligns with ethical obligations.38

Why one strong pillar cannot save a weak system

Organizations often try to “win” with one pillar. They cannot.

A strong Tools pillar without Rules and People usually produces fast adoption and slow regret. If AI is embedded in high-trust workflows without clear policy and trained review discipline, confabulation becomes a quality surprise, and data leakage becomes an incident that appears only after harm occurs. NIST’s own recommended actions for confabulation emphasize verification of sources and citations, which is a human practice that must be trained and enforced, not merely suggested.39

A strong Rules pillar without Tools and People becomes governance that people route around. If the approved tools are clunky or misaligned, informal adoption grows. Microsoft and LinkedIn reported that many employees bring their own AI tools to work, which is a predictable outcome when demand exceeds official supply.40 If rules read as “no,” but work reality requires “yes,” the organization gets neither compliance nor insight. It gets invisibility.

A strong People pillar without Rules and Tools becomes heroics. You can train a workforce to be careful, but if policies are unclear and tools are inconsistent, individuals are forced to improvise. That is not scalable. It is also not defensible in audits, disputes, or regulatory inquiries, because “we rely on good judgment” is not, by itself, a control environment.

Concrete examples make the point.

In legal practice, Mata v. Avianca illustrates what happens when people rely on an AI output without verification. The court described submission of fake opinions and emphasized the attorney gatekeeping role for accuracy.41 That failure is not only a “People” failure. It is also a “Rules” failure (insufficient verification expectations) and a “Tools” failure (a system capable of confabulation being used in a context where citation accuracy is mandatory).

In government, GAO reported that from 2023 to 2024, selected agencies’ generative AI use cases increased about ninefold, and officials reported challenges including complying with federal policies while keeping up with rapidly evolving technology and maintaining up-to-date appropriate use policies.42 That is not a “tool problem” alone. It is the coordination challenge of all three pillars under a fast-moving adoption curve.

The central lesson is the same across sectors: one pillar can slow failure, but it cannot prevent failure when the system is imbalanced.

What responsible AI adoption looks like in practice

Responsible adoption is visible, deliberate, and revisable. It does not require perfection, but it does require maturity signals that can be observed in operations.

First, mature organizations can answer “what are we using?” without guessing. This is inventory discipline. In the U.S. federal context, OMB requires agencies to maintain AI use case inventories and report additional risk detail for certain categories of AI, reinforcing the governance value of visibility.43 GAO’s accountability framework similarly treats monitoring as a core principle, including continuous or routine monitoring plans appropriate to each use case and risks such as bias and privacy.44

Second, mature organizations define approved tools and use cases, then align controls to the risk of each use case. The NIST AI RMF is positioned as a voluntary framework to improve how organizations incorporate trustworthiness considerations into design, development, use, and evaluation.45 Its generative AI profile operationalizes this by identifying key risk categories, including confabulation and data privacy, and by proposing concrete actions across the lifecycle.46 The practical takeaway for most institutions is not to adopt the entire catalog, but to adopt the posture: risk-based governance connected to implementation.

Third, mature organizations publish workable rules that match daily reality. The best AI usage policies are written in the language of actual tasks: summarizing documents, drafting emails, generating first-pass research, creating checklists, generating code snippets, producing client-facing content, or automating internal routing. NIST explicitly calls for acceptable use policies for generative AI interfaces and configurations.47 In legal environments, bar opinions highlight the need to understand tool policies (data retention and sharing), protect confidentiality, and verify outputs for accuracy.48

Fourth, mature organizations treat privacy and confidentiality boundaries as product requirements, not training tips. If a tool cannot meet the organization’s data handling needs, it is not an “approved tool,” regardless of how effective it is. This is where tool differentiation matters. Google’s enterprise claims about not using Workspace customer data to train Gemini models illustrate how vendors compete on data assurances and certifications, which becomes material in tool evaluation.49 NIST’s privacy risk discussion reinforces why these features matter: generative AI raises specific privacy risks given data scale and operational use.50

Fifth, mature organizations formalize people practices: role-based training, human review expectations, and escalation pathways. The EU AI Act’s discussion of AI literacy as a tool for enabling benefits while protecting rights and safety demonstrates that policymakers see training and understanding as part of the compliance ecosystem, not simply a best practice.51 In the U.S., OMB’s CAIO requirements explicitly reference the necessary skills and training to lead AI governance.52 In the legal profession, competence standards explicitly incorporate technology risk awareness.53

Finally, mature organizations build a feedback loop for drift. AI adoption is not “set and forget.” Models change. Vendors add connectors. New features appear in existing platforms. GAO’s monitoring principle, NIST’s lifecycle framing, and ISO’s management system logic all point toward continuous reassessment.54 A practical operational signal is a recurring review cadence for approved tools and high-risk use cases, paired with documented updates to policy, training, and technical controls.

In this sense, responsible AI adoption is less like buying a new application and more like building an organizational capability. The capability is measurable: visibility, defined use cases, enforceable rules, risk-aware tools, trained practice, and continuous monitoring.
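
The recurring review cadence mentioned above can be sketched in a few lines. This is an illustration under stated assumptions: the interval values, the tuple layout of each entry, and the `reviews_due` function are hypothetical, and a real cadence would be set by the organization's own risk assessment.

```python
from datetime import date, timedelta

# Assumed review intervals per risk tier, in days (illustrative values only).
REVIEW_INTERVAL_DAYS = {"high": 90, "medium": 180, "low": 365}


def reviews_due(entries, today):
    """Return the names of use cases that need reassessment.

    Each entry is (name, risk_tier, last_reviewed, vendor_changed), where
    vendor_changed marks a new model, connector, or feature since last review.
    """
    due = []
    for name, tier, last_reviewed, vendor_changed in entries:
        interval = timedelta(days=REVIEW_INTERVAL_DAYS[tier])
        overdue = today - last_reviewed > interval
        # A vendor change triggers review even if the interval has not elapsed.
        if overdue or vendor_changed:
            due.append(name)
    return due


entries = [
    ("contract_review", "high", date(2026, 1, 1), False),
    ("meeting_notes", "low", date(2025, 12, 1), False),
    ("email_drafting", "medium", date(2026, 3, 1), True),
]
print(reviews_due(entries, date(2026, 4, 15)))
```

Treating a vendor change as its own trigger reflects the drift problem: a calendar-only cadence can miss a feature that appeared between reviews.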

Conclusion

Most organizations are no longer deciding whether AI will enter their workflows. In many settings, it already has. The more relevant decision is whether AI will enter as a governed capability or as unmanaged drift.

Evidence from workplaces and government reinforces why this matters. Microsoft and LinkedIn’s reporting on widespread AI use at work, including employee-driven adoption, suggests that demand routinely outpaces formal rollout.55 GAO’s reporting on rapid growth in federal generative AI use cases shows that even highly regulated institutions are moving quickly, while struggling to keep policies current and aligned.56

The 3ITAL Pillars framework is designed for this reality. The Rules provide defensible boundaries and accountability. The Tools determine what is practically possible and what risks are imported. The People determine whether the system is used responsibly when deadlines and incentives apply. When the pillars are aligned, organizations do not merely adopt AI. They adopt AI in a way they can explain, audit, improve, and defend.

[1][40][55]. Microsoft and LinkedIn, 2024 Work Trend Index reporting on AI use at work (May 8, 2024), available at https://news.microsoft.com/source/2024/05/08/microsoft-and-linkedin-release-the-2024-work-trend-index-on-the-state-of-ai-at-work/

[2]. For a discussion of the difference between confabulation and hallucination, we refer the reader to the articles at https://www.integrative-psych.org/resources/confabulation-not-hallucination-ai-errors and https://www.wolterskluwer.com/en/expert-insights/hallucination-vs-confabulation-why-difference-matters-in-healthcare-ai

[3][4][9][12][14][18][19][22][27][28][29][39][46][47][50]. National Institute of Standards and Technology, Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile (NIST AI 600-1) (July 26, 2024), available at https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

[5][15]. CybSafe press release on employee sharing of sensitive information with AI tools without employer knowledge (September 26, 2024), available at https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

[6][10][25][38][48]. The Florida Bar, Ethics Opinion 24-1, Lawyers’ Use of Generative Artificial Intelligence (January 19, 2024), available at https://www.floridabar.org/etopinions/opinion-24-1/

[7][31]. Google, Google Workspace update announcing Gemini side panel integration (June 24, 2024), available at https://workspaceupdates.googleblog.com/2024/06/gemini-in-side-panel-of-google-docs-sheets-slides-drive.html

[8][32]. Microsoft, Microsoft 365 Copilot | Create, Share and Collaborate, available at https://www.office.com/

[11]. New York City Bar Association, Formal Opinion 2024-5, Generative AI in the Practice of Law (August 7, 2024), available at https://www.nycbar.org/reports/formal-opinion-2024-5-generative-ai-in-the-practice-of-law/

[13][41]. Mata v. Avianca, Inc., 678 F. Supp. 3d 443 (S.D.N.Y. 2023) (sanctions decision discussing fake citations generated by ChatGPT and the duty of attorney verification), available at https://www.law.berkeley.edu/wp-content/uploads/2025/12/Mata-v-Avianca-Inc.pdf

[16]. Federal Trade Commission, AI Companies: Uphold Your Privacy and Confidentiality Commitments (Jan. 9, 2024), available at https://www.ftc.gov/policy/advocacy-research/tech-at-ftc/2024/01/ai-companies-uphold-your-privacy-confidentiality-commitments

[17][26][37][43][52]. Office of Management and Budget, Memorandum M-24-10, Advancing Governance, Innovation, and Risk Management for Agency Use of Artificial Intelligence (March 28, 2024), available at https://www.whitehouse.gov/wp-content/uploads/2024/03/M-24-10-Advancing-Governance-Innovation-and-Risk-Management-for-Agency-Use-of-Artificial-Intelligence.pdf

[20][42][44][54][56]. U.S. Government Accountability Office, Artificial Intelligence: An Accountability Framework for Federal Agencies and Other Entities (GAO-21-519SP) (June 2021), available at https://www.gao.gov/assets/gao-21-519sp.pdf

[21]. International Organization for Standardization, ISO/IEC 42001 standard overview describing requirements for an AI management system, available at https://www.iso.org/standard/42001

[23][34][53]. American Bar Association, Model Rule 1.1 Comment [8] (maintaining competence, including technology risks), available at https://www.americanbar.org/groups/professional_responsibility/publications/model_rules_of_professional_conduct/rule_1_1_competence/comment_on_rule_1_1/

[24]. American Bar Association, Model Rules of Professional Conduct, Rule 1.6, Confidentiality of Information, available at https://www.americanbar.org/groups/professional_responsibility/publications/model_rules_of_professional_conduct/rule_1_6_confidentiality_of_information/

[30][49]. Google, Google Workspace AI page describing enterprise privacy posture and controls, available at https://workspace.google.com/solutions/ai/

[33]. IBM, definition of “shadow AI” (unsanctioned use of AI tools without IT approval), available at https://www.ibm.com/think/topics/shadow-ai

[35]. American Bar Association, newsroom release on Formal Opinion 512 (July 29, 2024), available at https://www.americanbar.org/news/abanews/aba-news-archives/2024/07/aba-issues-first-ethics-guidance-ai-tools/

[36][51]. Regulation (EU) 2024/1689 (Artificial Intelligence Act), including Recital on AI literacy and Article 113 (entry into force and phased application), available at https://idpc.org.mt/wp-content/uploads/2025/04/AI-Act.pdf

[45]. National Institute of Standards and Technology, AI Risk Management Framework, available at https://www.nist.gov/itl/ai-risk-management-framework